-

Membership

Membership

Anyone with an interest in the history of the built environment is welcome to join the Society of Architectural Historians -

Conferences

Conferences

SAH Annual International Conferences bring members together for scholarly exchange and networking -

Publications

Publications

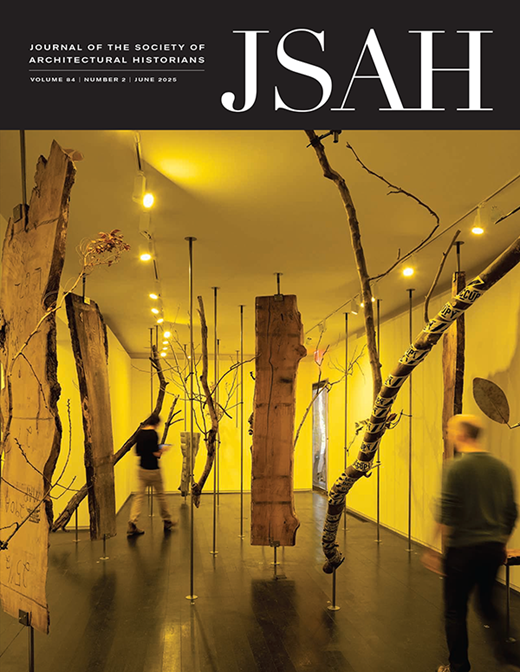

Through print and digital publications, SAH documents the history of the built environment and disseminates scholarshipLatest Issue:

-

Programs

Programs

SAH promotes meaningful engagement with the history of the built environment through its programsMember Programs

-

Jobs & Opportunities

Jobs & Opportunities

SAH provides resources, fellowships, and grants to help further your career and professional life -

Support

Support

We invite you to support the educational mission of SAH by making a gift, becoming a member, or volunteering -

About

About

SAH promotes the study, interpretation, and conservation of the built environment worldwide for the benefit of all

Revising the Institutional Survey: Less Can Lead to More

Welcome to The SAH Data Project’s process blog, a series of short-form reflections and interviews about the Society’s study of architectural history in higher education. By Sarah M. Dreller, Postdoctoral Researcher in the Humanities. #SAHDataProject

Like many of you, the SAH Data Project team has spent the past few months assessing which aspects of our work might contribute most to our community and developing strategies to continue in ways that don’t overburden the people we’re trying to serve. The factors to consider are varied, interconnected, and constantly shifting, and we are a small team with limited resources. But we are definitely trying, and I thought that sharing some new details here about one part of that effort, how we tightened up the institutional survey, might be of particular interest.

If you haven’t already heard, the institutional survey is what we originally referred to as the survey for department chairs and program administrators. This is the keystone in the structure of the project’s public-facing data gathering effort. It’s where we’re asking core quantitative questions about who has been teaching and studying the history of the built environment in the United States over the past decade, what forms that work has taken, and the ways in which institutions have supported their faculty and students in the process. Our ability to share meaningful observations about the health of our field in the final report will necessarily rely on the amount and quality of the information you provide via this survey now.

I’m going to be honest with you here. Our concerns back in March about the pandemic leading to reduced response rates have unfortunately proven correct. And, more recently, doing the urgently important work necessary to increase equity in American life might also be leaving little mental space for doing something like our institutional survey. The result is that the current data set just isn’t as robust as it might have been during a more typical spring term. It’s understandable, but still not quite what will satisfy the project’s full potential.

So, what concrete steps are we taking to turn this survey into a task that you can more reasonably complete within the context of today’s chaotic living conditions? A task that will leave you feeling it was worth your investment of time and intellectual labor?

This is a visual representation of the SAH Data Project’s Institutional Survey showing the type and number of questions that have been retained. The base diagram shows all of the questions as originally distributed in the survey. Each question is a separate cell in this diagram: questions that focused on change-over-time data are represented as a trend chart, and those that requested current “snapshot” data are indicated with a camera. Removed questions have a gray semi-transparent layer here; retained questions do not. This question-by-question assessment process resulted in a revised Institutional Survey that is approximately 35% shorter than its original pre-pandemic iteration. Infographic by Sarah M. Dreller.

Last month’s process blog post by Advisory Committee chair Abby Van Slyck outlined one major change, which is to expand the criteria for who can complete the survey on behalf of their department. We’ve also set up open support times on Zoom so that anyone can drop in to ask questions and get help directly from me. We’re extending the closing dates for all the project’s surveys to give you a chance to track down the information you need. And we’re doing other more behind-the-scenes things, too, to make sure as many different kinds of people as possible hear what the SAH Data Project is about. But the part of all this that I’m especially excited about at the moment – and what I suspect might really help more of you contribute your voice now – is what the team has done over the past month to strategically reduce the density of the information we’re asking from you. In retrospect, we took a kind of “less is more” approach, auditing the survey question-by-question to identify and retain only those questions most likely to address the project’s fundamental focus on change over time. The newly released institutional survey has a much stronger emphasis on how enrollments, faculty and student demographics, and course offerings have evolved over the past ten years, data we hope we’ll be able to synthesize into descriptions of our field’s key academic trends.

Trimming back the survey to its most essential components was easier in some ways and harder in others. On the one hand, we were very grateful to those of you who have already completed this survey because your responses provided some very useful data about the paths that different kinds of respondents have been cutting through it. Things like who skipped which types of questions, how the wording of certain questions early on led to confusion later, etc. really helped us be strategic. On the other hand, the decision to keep some questions and cut others wasn’t as simple as mapping the preferences of past respondents. Rather, it was primarily about evaluating questions from the point of view of people like most of you, the survey’s potential future respondents, to determine whether the information we were likely to get from any given question would really add enough to the project to justify asking you to spend your time answering it. This was a tough thing to do, but the team ultimately decided that about thirty-five percent of the survey could be removed without jeopardizing the data that we really need.

I should add that we absolutely did the same thing meticulously, repeatedly in the fall, too. Our expectations calculus was different then, however. Everyone has more on their plates now, a whole lot more, in some cases. So, we’re offering a new leaner version of the institutional survey in hopes that you’ll give it a serious look. And, if you are in a position to complete it, we hope you find both it and the process of completing it substantive in all the most meaningful ways. Thank you, in advance, for your contribution.
